(*NOTE: One owner of a 27" iMac with the 675MX reported a 720MHz core clock rating when he ran the CUDA-Z benchmark. However, LuxMark rated the same iMac at 324MHz. Since we can't be sure whether those apps are reporting correctly, we used NVIDIA's rating for the 675MX's core clock in the table above.)

KEY INSIGHTS from the SPECS

1. The 680MX has twice the video memory of the 675MX. In our testing, we used OpenGL Driver Monitor to confirm that the Heaven Benchmark (best settings, 8x AA, 16x Aniso, 2560x1440 rez) was using more than 1GB of VRAM. Ditto for Apple's Aperture pro app when running the Noise Ninja plugin to remove noise from 50 raw images. We emphasize this because the use of VRAM will not diminish in the future. It will increase.

2. The 680MX has almost twice the Texture Fillrate. That implies nearly twice the potential when running a graphics intensive app that has the GPU render and store complex textures -- though it's often true that graphics intensive apps don't saturate the full bandwidth.

PERFORMANCE COMPARISON

One big caveat. The "remote mad scientist" who volunteered to send us results for a 'late 2012' iMac equipped with the GeForce GTX 675MX was running OS X 10.8.3 beta. Our lab's iMac with the 680MX is running 10.8.2. His iMac was running a newer version of the NVIDIA driver with performance optimizations lacking in our version. But we are desperate to get you some performance numbers, so we are publishing them anyway.

Unigine's Heaven Benchmark 3.0 has proven to be a reliable predictor of overall OpenGL performance. It stresses the GPU as it flies through 26 scenes of a village, a ship, and floating islands using advanced ambient occlusion, volumetric clouds, and various lighting conditions with refraction.

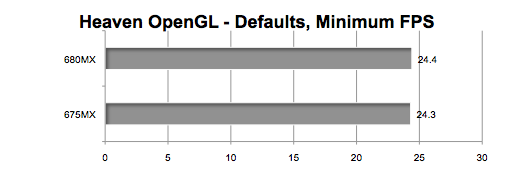

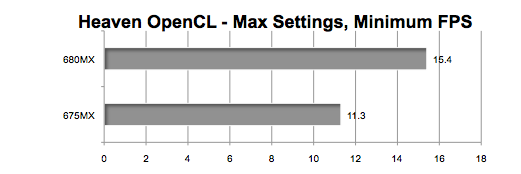

First we used the DEFAULT settings including 2560x1440 resolution, no AA, 4x Anisotropy, Medium quality Shaders, High Quality Textures, Tessellation disabled, Occlusion, Refraction, and Volumetric enabled. We posted the minimum rather than the average frames per second (FPS). (LONGER bar means FASTER.)

As you can see, it was basically a "tie." Then we "amped up" the settings using 2560x1440 resolution, 8x AA, 16x Anisotropy, High Quality Shaders, High Quality Textures, Tessellation disabled, Occlusion, Refraction, and Volumetric enabled. No longer a "tie."

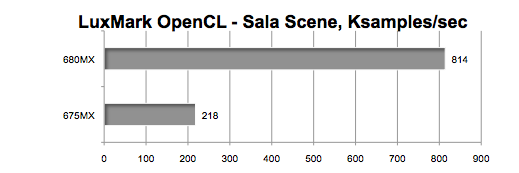

LuxMark 2.0 is an OpenCL benchmark that uses a path tracer to measure the thousands of parallel paths used by the GPU to render a scene. That's OpenCL, not OpenGL.

We used the Sala scene which contains 488,000+ triangles. The result is measured in thousands of samples per second. (LONGER bar means FASTER.) Shocking difference, eh?

GRAPH LEGEND

680MX = GeForce GTX 680MX GPU installed in our 'late 2012' (27") iMac 3.4GHz Core i7

675MX = GeForce GTX 675MX GPU installed in a late 2012 (27") iMac 3.4GHz Core i7

PERFORMANCE INSIGHTS

1. Heaven's OpenGL test showed the 680MX's advantage increasing as the quality settings increased. Plus, as mentioned earlier, the extreme Heaven settings utilized more than 1GB of VRAM, giving the 680MX an advantage. The counterpoint is, "Is it the norm to run 3D animation or games at those extreme settings?" Probably not, but it will be interesting to see what kind of gap is revealed when we test with real world apps.

2. The OpenCL benchmark (LuxMark) result was the most surprising. We did not expect the 680MX to run almost four times as fast as the 675MX. Why is this important? If you are running apps such as Motion, Final Cut Pro, and Photoshop that use OpenCL accelerated effects, then this is a key metric.
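"Times as fast" and "percent faster" are easy to mix up, so here is a minimal Python sketch of the conversion. The helper names are hypothetical, and the only figures used are the "20% to 273% faster" range quoted in the buying advice later in this article. No benchmark scores are assumed.

```python
def ratio_from_percent_faster(percent):
    """Convert an 'N% faster' figure into an 'N times as fast' ratio."""
    return 1.0 + percent / 100.0


def percent_faster_from_ratio(ratio):
    """Convert an 'N times as fast' ratio into an 'N% faster' figure."""
    return (ratio - 1.0) * 100.0


# The two ends of the range quoted in this article.
for percent in (20, 273):
    ratio = ratio_from_percent_faster(percent)
    print(f"{percent}% faster = {ratio:.2f} times as fast")
```

Read this way, "273% faster" and "almost four times as fast" describe the same LuxMark gap.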

This is a very limited snapshot of the relative graphics performance. And it's flawed because the two iMacs were running different versions of OS X (and therefore different versions of the NVIDIA driver). But we were desperate to get some kind of performance comparison.

NOT ROCKET SCIENCE

It would seem to me that if you have decided to buy the top model of the 27-inch 'late 2012' iMac and you are down to the decision of which GPU to get, the GTX 680MX should be a no-brainer. Just looking at the specs chart at the top of this article should make it clear that the 680MX is worth the additional $150.

Think of it this way. For 7.5% over the base price ($1999) of the 3.2GHz Core i5 27" iMac, you get a GPU that is 20% to 273% faster. If you are ordering the 3.4GHz Core i7 27" iMac with 16GB of RAM and a 1TB Fusion Drive (a $2649 configuration), the 680MX GPU option only adds 5.7% to the price.
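For anyone who wants to check the math or plug in a different configuration, here is a quick Python sketch of the percentages. The function and constant names are made up for illustration, and the dollar amounts are the ones quoted in this article.

```python
# Prices quoted in this article, in US dollars.
GPU_UPGRADE = 150       # cost of the GTX 680MX option
BASE_I5_IMAC = 1999     # 3.2GHz Core i5 27" iMac base price
LOADED_I7_IMAC = 2649   # 3.4GHz Core i7, 16GB RAM, 1TB Fusion Drive


def premium_percent(option_price, system_price):
    """Return the option's price as a percentage of the system price."""
    return 100.0 * option_price / system_price


print(f"Over the base i5: {premium_percent(GPU_UPGRADE, BASE_I5_IMAC):.1f}%")
print(f"Over the loaded i7: {premium_percent(GPU_UPGRADE, LOADED_I7_IMAC):.1f}%")
```

The pricier the configuration, the smaller the share of the total the $150 upgrade represents.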

If your budget is tight, you can offset the price of the GTX 680MX by ordering your 27" iMac with less memory or without AppleCare. You can add both later. You can NOT add a better GPU later.

SEE TAKE TWO

On February 22nd, 2013, we tested both GPUs running two new OpenGL benchmarks, Heaven 4.0 and Valley 1.0.

Thoughts? Questions? Got GTX 675MX? Contact

Also, you can follow him on Twitter @barefeats